Buzz / 05 05, 2017

WHY THE TROLLEY PROBLEM ISN'T A PROBLEM FOR SELF-DRIVING CARS

Trolley Problem-like scenarios are a popular trope when discussing self-driving cars.

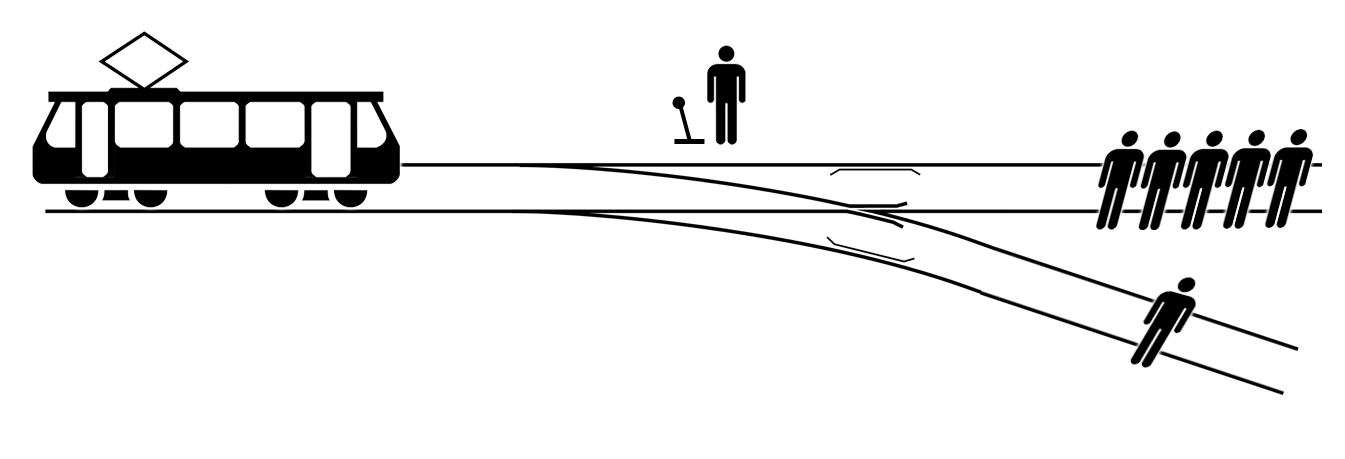

What is the Trolley Problem?

The Trolley Problem is an ethical dilemma, usually involving a life-or-death scenario. Here is the scene: a trolley loses control, and straight ahead on the tracks is a group of five people. You control a lever that can divert the trolley to another track. The problem is that there is one person on the other track. Do you save the five people or the one person? Either way, people will get hurt. Which is the more ethical choice?

Trolley Problem & Self-Driving Cars

A Google search on the topic returns tens of thousands of hits, many posing scenarios like “Would you program a car to drive off the side of a mountain road, sacrificing the occupant, if a school bus was careening down the mountain in the wrong lane?”[1] It’s an interesting and fun question to think about, but, unknown to most of the authors discussing it, it isn’t an issue for a self-driving car.

These types of problems are classified as “no-win scenarios.” What should the car do if it’s in a position where there is no possible “right” move? To answer that question, let’s look at a domain where the problem is already solved: Tick-Tack-Toe.

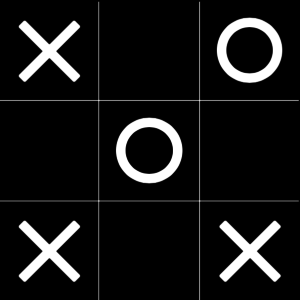

No-win Scenario for ‘O’ in Tick-Tack-Toe

This image is a no-win scenario for O. If the computer is playing O and goes on the left side of the board, X will play on the bottom and win; if the computer plays on the bottom, X will play on the left and win. No matter what it does in this situation, the computer loses.
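To make the idea concrete, here is a minimal Python sketch that recognizes this kind of position. The board below is a hypothetical layout chosen to match the description above (X threatening on the left and on the bottom); it is not necessarily the exact board in the image, and the helper names `winning_cells` and `is_no_win_for_o` are invented for illustration.

```python
# Cells are numbered 0..8, left to right and top to bottom.
LINES = [
    (0, 1, 2), (3, 4, 5), (6, 7, 8),   # rows
    (0, 3, 6), (1, 4, 7), (2, 5, 8),   # columns
    (0, 4, 8), (2, 4, 6),              # diagonals
]

def winning_cells(board, player):
    """Empty cells that would complete three in a row for `player`."""
    cells = set()
    for line in LINES:
        marks = [board[i] for i in line]
        if marks.count(player) == 2 and marks.count(".") == 1:
            cells.add(line[marks.index(".")])
    return sorted(cells)

def is_no_win_for_o(board):
    """True when O (to move) cannot win now and X has two or more winning cells.

    This is a simple sufficient test for a fork, not a full game search.
    """
    return not winning_cells(board, "O") and len(winning_cells(board, "X")) >= 2

# Hypothetical position, O to move ("." marks an empty cell).
board = ("X.O"
         ".O."
         "X.X")

print(winning_cells(board, "X"))  # cells X is threatening
print(is_no_win_for_o(board))
```

O can block only one of X's two threatened cells per turn, which is exactly why the position is lost.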

Yet we know that a properly designed computer program never loses Tick-Tack-Toe. How can that be? Like the self-driving car scenario (kill the occupant or hit the school bus?), this is a no-win scenario for the computer. If it can’t win in this scenario, how can it be true that the computer never loses a game of Tick-Tack-Toe?

The answer is the same in both cases… the computer doesn’t allow itself to get into this situation.
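One way to sketch "not getting into this situation" in code is an exhaustive look-ahead (a plain minimax search). This is an illustrative Python sketch, not a claim about how any particular program or car is built; the names `outcome` and `safe_moves` and the sample position are assumptions made for the example.

```python
from functools import lru_cache

# Cells are numbered 0..8, left to right and top to bottom; "." is empty.
LINES = [
    (0, 1, 2), (3, 4, 5), (6, 7, 8),
    (0, 3, 6), (1, 4, 7), (2, 5, 8),
    (0, 4, 8), (2, 4, 6),
]

def winner(board):
    """Return "X" or "O" if that player has three in a row, else None."""
    for a, b, c in LINES:
        if board[a] != "." and board[a] == board[b] == board[c]:
            return board[a]
    return None

@lru_cache(maxsize=None)
def outcome(board, to_move):
    """Result with best play by both sides, from O's point of view:
    +1 means O wins, 0 means a draw, -1 means O loses."""
    w = winner(board)
    if w is not None:
        return 1 if w == "O" else -1
    if "." not in board:
        return 0
    nxt = "X" if to_move == "O" else "O"
    results = [
        outcome(board[:i] + to_move + board[i + 1:], nxt)
        for i, cell in enumerate(board) if cell == "."
    ]
    return max(results) if to_move == "O" else min(results)

def safe_moves(board):
    """Cells O can take without walking into a forced loss."""
    return [
        i for i, cell in enumerate(board)
        if cell == "." and outcome(board[:i] + "O" + board[i + 1:], "X") >= 0
    ]

# One move before a fork: X holds two opposite corners, O holds the center.
print(safe_moves("X...O...X"))   # the moves that keep O out of trouble
print(outcome(".........", "X")) # value of the empty board with X moving first
```

In this sample position the search rejects the two remaining corners, because taking either one lets X set up a double threat on the next move. The program never has to "solve" the no-win position; it simply declines the move that leads there.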

“It really is just that simple. The self-driving car will never have to choose between killing a crowd of people or killing its occupant if it never allows itself to be in a situation where that choice will be necessary.”

What About Situations Designers Couldn’t Anticipate?

The typical counter to the above is to ask, “What about situations that the designers couldn’t anticipate?” But as any programmer will tell you, that’s the wrong question. If the programmer can’t anticipate the situation, then there is nothing at all the programmer can do. (S)he won’t be able to tell the car in advance which action to take. Asking how to solve a problem when you don’t know the particulars is silly on its face.

So the solution is obvious. Anticipate as much as possible and plan several moves ahead so that known no-win scenarios can never arise. As for the unknown no-win scenarios, there is nothing designers can do in such cases, so asking what they should do is a waste of time.

[1] Washington Post

Daniel Tartaglia is a Senior Developer & iOS Mentor at Haneke Design in Tampa, FL. He has been programming since high school, but only started professionally in the late ’90s. Daniel has worked professionally writing C++, Objective-C, JavaScript, Python, Ruby, and now Swift.